𝗔𝗺𝗮𝘇𝗼𝗻 𝗘𝗞𝗦 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲: 𝗔 𝗣𝗿𝗮𝗰𝘁𝗶𝗰𝗮𝗹 𝗚𝘂𝗶𝗱𝗲 𝗳𝗼𝗿 𝗞𝘂𝗯𝗲𝗿𝗻𝗲𝘁𝗲𝘀 𝗔𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘀

𝗪𝗵𝘆 “𝗳𝗿𝗲𝗲” control planes are no longer enough at scale

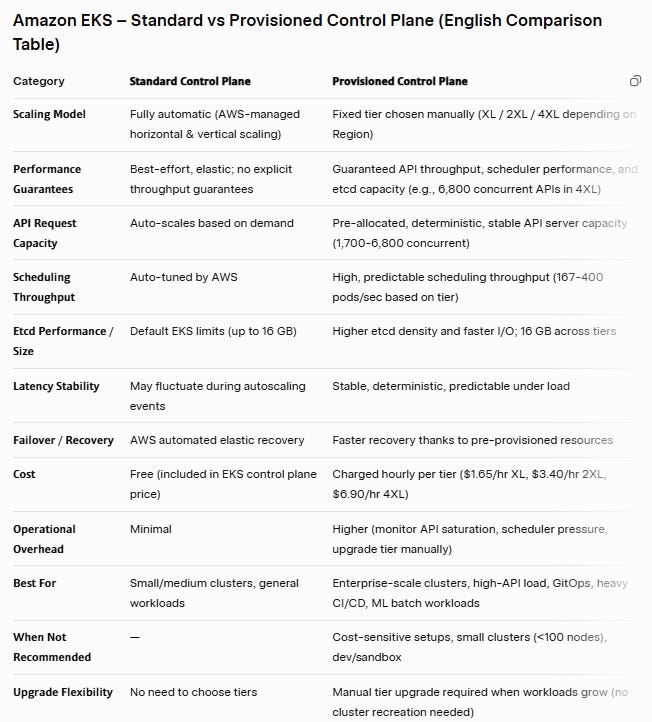

In most EKS 𝗱𝗶𝘀𝗰𝘂𝘀𝘀𝗶𝗼𝗻𝘀, the 𝗰𝗼𝗻𝘁𝗿𝗼𝗹 𝗽𝗹𝗮𝗻𝗲 is treated as “𝘵𝘩𝘦 𝘮𝘢𝘯𝘢𝘨𝘦𝘥 𝘱𝘢𝘳𝘵 𝘸𝘦 𝘥𝘰𝘯’𝘵 𝘩𝘢𝘷𝘦 𝘵𝘰 𝘵𝘩𝘪𝘯𝘬 𝘢𝘣𝘰𝘶𝘵”. For small and 𝗺𝗲𝗱𝗶𝘂𝗺-𝘀𝗶𝘇𝗲𝗱 clusters, that mental model still works: the 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 EKS control plane mode 𝗮𝘂𝘁𝗼-𝘀𝗰𝗮𝗹𝗲𝘀 𝗶𝗻 𝘁𝗵𝗲 𝗯𝗮𝗰𝗸𝗴𝗿𝗼𝘂𝗻𝗱 and quietly does its job.

But as 𝗰𝗹𝘂𝘀𝘁𝗲𝗿𝘀 𝗴𝗿𝗼𝘄, workloads become more 𝗰𝗼𝗺𝗽𝗹𝗲𝘅, and organizations start consolidating 𝗺𝘂𝗹𝘁𝗶𝗽𝗹𝗲 𝘁𝗲𝗮𝗺𝘀 onto 𝘀𝗵𝗮𝗿𝗲𝗱 𝗘𝗞𝗦 platforms, the control plane itself becomes a strategic capacity constraint. At some point, “𝘣𝘦𝘴𝘵-𝘦𝘧𝘧𝘰𝘳𝘵 𝘮𝘢𝘯𝘢𝘨𝘦𝘥” is 𝗻𝗼 𝗹𝗼𝗻𝗴𝗲𝗿 𝗲𝗻𝗼𝘂𝗴𝗵: you need 𝗴𝘂𝗮𝗿𝗮𝗻𝘁𝗲𝗲𝗱 and 𝗽𝗿𝗲𝗱𝗶𝗰𝘁𝗮𝗯𝗹𝗲 performance.

𝗧𝗵𝗮𝘁’𝘀 exactly the problem the new 𝗔𝗺𝗮𝘇𝗼𝗻 𝗘𝗞𝗦 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 is designed to solve.

𝗪𝗵𝗮𝘁 𝘁𝗵𝗲 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 𝗮𝗰𝘁𝘂𝗮𝗹𝗹𝘆 𝗰𝗵𝗮𝗻𝗴𝗲𝘀

In 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 mode, AWS dynamically adjusts control plane resources 𝗯𝗮𝘀𝗲𝗱 𝗼𝗻 𝗹𝗼𝗮𝗱. You don’t see the underlying instances; you just 𝗴𝗲𝘁 𝗮𝗻 𝗔𝗣𝗜 𝗲𝗻𝗱𝗽𝗼𝗶𝗻𝘁 and an SLO. It’s simple, low-friction and 𝗶𝗱𝗲𝗮𝗹 𝗳𝗼𝗿 𝘁𝗵𝗲 𝗺𝗮𝗷𝗼𝗿𝗶𝘁𝘆 of clusters.

The 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 introduces a different model:

• You pick a 𝗽𝗲𝗿𝗳𝗼𝗿𝗺𝗮𝗻𝗰𝗲 𝘁𝗶𝗲𝗿 (XL, 2XL, 4XL, depending on region).

• Each tier comes with documented, 𝗴𝘂𝗮𝗿𝗮𝗻𝘁𝗲𝗲𝗱 throughput characteristics:

• 𝗠𝗮𝘅 𝗔𝗣𝗜 𝗰𝗼𝗻𝗰𝘂𝗿𝗿𝗲𝗻𝗰𝘆: XL=1,700, 2XL=3,400, 4XL=6,800.

• 𝗣𝗼𝗱 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗶𝗻𝗴 𝗿𝗮𝘁𝗲: XL=167/sec, 2XL=283/sec, 4XL=400/sec.

• 𝗘𝘁𝗰𝗱 𝗱𝗮𝘁𝗮 𝘀𝗶𝘇𝗲: 16 GB across tiers.

• 𝗔𝗪𝗦 𝗽𝗿𝗲-𝗮𝗹𝗹𝗼𝗰𝗮𝘁𝗲𝘀 𝘁𝗵𝗮𝘁 𝗰𝗮𝗽𝗮𝗰𝗶𝘁𝘆 for your cluster’s control plane.

In other words, you trade some of the 𝗲𝗹𝗮𝘀𝘁𝗶𝗰𝗶𝘁𝘆 of 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 mode for 𝗱𝗲𝘁𝗲𝗿𝗺𝗶𝗻𝗶𝘀𝗺: the control plane behaves consistently under load because its 𝗰𝗮𝗽𝗮𝗰𝗶𝘁𝘆 𝗶𝘀 𝗿𝗲𝘀𝗲𝗿𝘃𝗲𝗱 𝘂𝗽 𝗳𝗿𝗼𝗻𝘁.

For Kubernetes architects, that means you can finally treat the control plane much more explicitly in your capacity planning, SLO definitions and platform SLAs.
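
As a rough illustration of treating the control plane explicitly in capacity planning, the tier figures listed above can be written down as data and queried. This is a minimal sketch, not an AWS API: the `Tier` class and `smallest_tier_for` helper are hypothetical names, and the numbers are the ones quoted in this post, so check them against current AWS documentation before relying on them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    max_api_concurrency: int   # max concurrent API requests
    pod_scheduling_rate: int   # pods scheduled per second
    etcd_size_gb: int          # etcd data size


# Figures as quoted in this post (assumption: still current for your region).
TIERS = [
    Tier("XL", 1_700, 167, 16),
    Tier("2XL", 3_400, 283, 16),
    Tier("4XL", 6_800, 400, 16),
]


def smallest_tier_for(peak_api_concurrency: int,
                      peak_pods_per_sec: int) -> Optional[Tier]:
    """Return the smallest tier covering both peaks, or None if none does."""
    for tier in TIERS:  # ordered smallest to largest
        if (tier.max_api_concurrency >= peak_api_concurrency
                and tier.pod_scheduling_rate >= peak_pods_per_sec):
            return tier
    return None


if __name__ == "__main__":
    choice = smallest_tier_for(peak_api_concurrency=2_000, peak_pods_per_sec=150)
    print(choice.name if choice else "exceeds all tiers")
```

With the sample peaks shown, the API concurrency requirement rules out XL, so the helper lands on 2XL even though the scheduling rate alone would fit the smaller tier.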

𝗪𝗵𝗲𝗻 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 𝗺𝗼𝗱𝗲 𝗶𝘀 𝘀𝘁𝗶𝗹𝗹 𝘁𝗵𝗲 𝗿𝗶𝗴𝗵𝘁 𝗰𝗵𝗼𝗶𝗰𝗲

Before we get into the benefits, it’s worth clarifying: there’s no point in over-engineering a control layer that the workload doesn’t need.

You 𝘀𝗵𝗼𝘂𝗹𝗱 almost certainly 𝘀𝘁𝗮𝘆 on 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 mode if:

• Your clusters are 𝘀𝗺𝗮𝗹𝗹 𝘁𝗼 𝗺𝗶𝗱-𝘀𝗶𝘇𝗲𝗱 (tens of nodes, not hundreds).

• 𝗔𝗣𝗜 𝘁𝗿𝗮𝗳𝗳𝗶𝗰 𝗶𝘀 𝗺𝗼𝗱𝗲𝗿𝗮𝘁𝗲: mostly human-driven kubectl usage, GitOps syncs, and a few controllers.

• You 𝗱𝗼𝗻’𝘁 𝗿𝘂𝗻 𝗹𝗮𝗿𝗴𝗲-𝘀𝗰𝗮𝗹𝗲 𝗯𝗮𝘁𝗰𝗵, 𝗠𝗟, or massively multi-tenant workloads.

• 𝗖𝗼𝘀𝘁 𝗼𝗽𝘁𝗶𝗺𝗶𝘇𝗮𝘁𝗶𝗼𝗻 𝗶𝘀 𝗺𝗼𝗿𝗲 𝗶𝗺𝗽𝗼𝗿𝘁𝗮𝗻𝘁 than squeezing out maximum throughput.

• You 𝗱𝗼𝗻’𝘁 𝗵𝗮𝘃𝗲 𝘀𝘁𝗿𝗼𝗻𝗴 𝗶𝗻𝘁𝗲𝗿𝗻𝗮𝗹 𝗦𝗟𝗔𝘀 around API latency or scheduling guarantees.

In these environments, 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 mode gives you:

• 𝗭𝗲𝗿𝗼 extra control-plane 𝗰𝗼𝘀𝘁.

• 𝗠𝗶𝗻𝗶𝗺𝗮𝗹 operational 𝗼𝘃𝗲𝗿𝗵𝗲𝗮𝗱.

• 𝗦𝘂𝗳𝗳𝗶𝗰𝗶𝗲𝗻𝘁 𝗲𝗹𝗮𝘀𝘁𝗶𝗰𝗶𝘁𝘆 for typical day-to-day load patterns.

If this is you, the best “optimization” is usually good cluster hygiene: reasonable pod and node counts, avoiding API-abusive controllers, and right-sized node groups.

𝗪𝗵𝗲𝗿𝗲 𝘁𝗵𝗲 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 𝘀𝗵𝗶𝗻𝗲𝘀

The 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 starts to make sense once you hit one or more of these patterns:

1. 𝗛𝗶𝗴𝗵𝗹𝘆 𝗔𝗣𝗜-𝗶𝗻𝘁𝗲𝗻𝘀𝗶𝘃𝗲 𝗮𝘂𝘁𝗼𝗺𝗮𝘁𝗶𝗼𝗻

You 𝗿𝘂𝗻 𝗺𝘂𝗹𝘁𝗶𝗽𝗹𝗲 𝗰𝗼𝗻𝘁𝗿𝗼𝗹𝗹𝗲𝗿𝘀 that 𝗵𝗮𝗺𝗺𝗲𝗿 the 𝗔𝗣𝗜 server:

• 𝗚𝗶𝘁𝗢𝗽𝘀 𝗲𝗻𝗴𝗶𝗻𝗲𝘀 (Argo CD / Flux) syncing many applications across namespaces.

• Cluster Autoscaler or Karpenter 𝗮𝗴𝗴𝗿𝗲𝘀𝘀𝗶𝘃𝗲𝗹𝘆 𝘀𝗰𝗮𝗹𝗶𝗻𝗴 node groups.

• 𝗢𝗽𝗲𝗿𝗮𝘁𝗼𝗿𝘀 that reconcile 𝗹𝗮𝗿𝗴𝗲 𝗖𝗥𝗗 graphs in tight loops.

In these setups, the 𝗰𝗼𝗻𝘁𝗿𝗼𝗹 𝗽𝗹𝗮𝗻𝗲 is effectively a 𝗰𝗲𝗻𝘁𝗿𝗮𝗹 𝗺𝗲𝘀𝘀𝗮𝗴𝗲 𝗯𝘂𝘀 for your platform. 𝗜𝗳 𝗔𝗣𝗜 𝗹𝗮𝘁𝗲𝗻𝗰𝘆 𝘀𝗽𝗶𝗸𝗲𝘀 or throughput is constrained, 𝗲𝘃𝗲𝗿𝘆𝘁𝗵𝗶𝗻𝗴 (deployments, scaling, health checks) 𝘀𝗹𝗼𝘄𝘀 𝗱𝗼𝘄𝗻 or 𝗯𝗲𝗰𝗼𝗺𝗲𝘀 𝘂𝗻𝘀𝘁𝗮𝗯𝗹𝗲.

A 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲 𝗴𝗶𝘃𝗲𝘀 you a hard 𝗽𝗲𝗿𝗳𝗼𝗿𝗺𝗮𝗻𝗰𝗲 floor for that bus.

2. 𝗟𝗮𝗿𝗴𝗲-𝘀𝗰𝗮𝗹𝗲, 𝗺𝘂𝗹𝘁𝗶-𝘁𝗲𝗻𝗮𝗻𝘁 𝗰𝗹𝘂𝘀𝘁𝗲𝗿𝘀

If you’re running:

• 𝗵𝘂𝗻𝗱𝗿𝗲𝗱𝘀 of nodes (up to 40,000 in 4XL),

• 𝘁𝗲𝗻𝘀 of 𝘁𝗵𝗼𝘂𝘀𝗮𝗻𝗱𝘀 of 𝗽𝗼𝗱𝘀 (up to 640,000),

• and 𝗺𝘂𝗹𝘁𝗶𝗽𝗹𝗲 𝘁𝗲𝗮𝗺𝘀 / tenants on a 𝘀𝗵𝗮𝗿𝗲𝗱 𝗰𝗹𝘂𝘀𝘁𝗲𝗿,

then the control plane becomes a shared 𝗯𝗼𝘁𝘁𝗹𝗲𝗻𝗲𝗰𝗸. When 𝗼𝗻𝗲 𝘁𝗲𝗻𝗮𝗻𝘁 performs a 𝗺𝗮𝘀𝘀𝗶𝘃𝗲 𝗱𝗲𝗽𝗹𝗼𝘆𝗺𝗲𝗻𝘁 or runs a CI/CD storm, 𝗲𝘃𝗲𝗿𝘆𝗼𝗻𝗲 𝗲𝗹𝘀𝗲 𝗳𝗲𝗲𝗹𝘀 it.

With a 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 𝗖𝗼𝗻𝘁𝗿𝗼𝗹 𝗣𝗹𝗮𝗻𝗲, you can:

• 𝗥𝗲𝘀𝗲𝗿𝘃𝗲 enough headroom to absorb tenant spikes.

• Back your “platform SLOs” with real, 𝗴𝘂𝗮𝗿𝗮𝗻𝘁𝗲𝗲𝗱 𝗰𝗮𝗽𝗮𝗰𝗶𝘁𝘆.

• 𝗥𝗲𝗱𝘂𝗰𝗲 𝗻𝗼𝗶𝘀𝘆-𝗻𝗲𝗶𝗴𝗵𝗯𝗼𝗿 effects at the control-plane level.

This 𝗱𝗼𝗲𝘀𝗻’𝘁 𝗿𝗲𝗽𝗹𝗮𝗰𝗲 𝘄𝗼𝗿𝗸𝗹𝗼𝗮𝗱-𝗹𝗲𝘃𝗲𝗹 𝗶𝘀𝗼𝗹𝗮𝘁𝗶𝗼𝗻 (namespaces, quotas, network policies), but it removes one major shared choke point.

3. 𝗛𝗲𝗮𝘃𝘆 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗶𝗻𝗴 𝘄𝗼𝗿𝗸𝗹𝗼𝗮𝗱𝘀 (𝗔𝗜/𝗠𝗟, 𝗯𝗮𝘁𝗰𝗵, 𝗱𝗮𝘁𝗮)

Workloads like:

• large 𝗠𝗟 𝘁𝗿𝗮𝗶𝗻𝗶𝗻𝗴 jobs with many 𝘀𝗵𝗼𝗿𝘁-𝗹𝗶𝘃𝗲𝗱 𝗽𝗼𝗱𝘀,

• Spark or Flink jobs on Kubernetes,

• or 𝗶𝗻𝘁𝗲𝗿𝗻𝗮𝗹 𝗯𝗮𝘁𝗰𝗵 pipelines

𝗴𝗲𝗻𝗲𝗿𝗮𝘁𝗲 𝗶𝗻𝘁𝗲𝗻𝘀𝗲 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗶𝗻𝗴 𝗽𝗿𝗲𝘀𝘀𝘂𝗿𝗲. The 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗲𝗿’𝘀 𝗮𝗯𝗶𝗹𝗶𝘁𝘆 to process pod events and place them 𝗾𝘂𝗶𝗰𝗸𝗹𝘆 𝗯𝗲𝗰𝗼𝗺𝗲𝘀 𝗰𝗿𝗶𝘁𝗶𝗰𝗮𝗹 to job throughput.

𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 Control Plane tiers are explicitly 𝘁𝘂𝗻𝗲𝗱 𝗳𝗼𝗿 𝗵𝗶𝗴𝗵𝗲𝗿 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗶𝗻𝗴 throughput, which translates into:

• faster batch job ramp-up,

• better cluster utilization,

• and more predictable completion times for time-sensitive jobs.
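
To make the ramp-up point concrete, here is a small back-of-the-envelope sketch. It divides a burst of pending pods by the per-tier scheduling rates quoted earlier in this post. The function name and the 50,000-pod burst are made up for illustration, and the result is only a lower bound: it ignores node provisioning, image pulls and anything else that happens after scheduling.

```python
# Pod scheduling rates (pods/sec) as quoted in this post; verify before use.
SCHEDULING_RATE_PER_SEC = {"XL": 167, "2XL": 283, "4XL": 400}


def min_scheduling_seconds(pod_count: int, tier: str) -> float:
    """Lower bound on the time to schedule `pod_count` pods at the tier's rate."""
    return pod_count / SCHEDULING_RATE_PER_SEC[tier]


if __name__ == "__main__":
    burst = 50_000  # hypothetical batch job size
    for tier in SCHEDULING_RATE_PER_SEC:
        seconds = min_scheduling_seconds(burst, tier)
        print(f"{tier}: at least {seconds:.0f} s to schedule {burst:,} pods")
```

For that burst, the lower bound drops from roughly 299 seconds on XL to 125 seconds on 4XL, which is the kind of difference that matters for time-sensitive jobs.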

𝗧𝗿𝗮𝗱𝗲-𝗼𝗳𝗳𝘀: 𝘄𝗵𝗮𝘁 𝘆𝗼𝘂 𝗴𝗶𝘃𝗲 𝘂𝗽

The benefits are real, but so are the trade-offs:

1. 𝗔𝗱𝗱𝗶𝘁𝗶𝗼𝗻𝗮𝗹 𝗰𝗼𝘀𝘁

• 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 Control Plane tiers are 𝗯𝗶𝗹𝗹𝗲𝗱 𝗼𝗻 𝘁𝗼𝗽 𝗼𝗳 the 𝘂𝘀𝘂𝗮𝗹 𝗘𝗞𝗦 𝗰𝗼𝗻𝘁𝗿𝗼𝗹-𝗽𝗹𝗮𝗻𝗲 𝗽𝗿𝗶𝗰𝗶𝗻𝗴 ($1.65/hr XL, $3.40/hr 2XL, $6.90/hr 4XL).

• As an architect, you need to treat this as a platform cost center and justify it in terms of:

• reduced incidents,

• improved SLOs,

• and higher workload density.

2. 𝗡𝗼 𝗮𝘂𝘁𝗼𝗺𝗮𝘁𝗶𝗰 𝗰𝗼𝗻𝘁𝗿𝗼𝗹-𝗽𝗹𝗮𝗻𝗲 𝘀𝗰𝗮𝗹𝗶𝗻𝗴

• You choose the tier.

• If your usage grows beyond it, 𝘆𝗼𝘂 𝗵𝗮𝘃𝗲 𝘁𝗼 𝗱𝗲𝗰𝗶𝗱𝗲 𝘄𝗵𝗲𝗻 𝘁𝗼 𝘀𝗰𝗮𝗹𝗲 𝘂𝗽.

• That means setting up 𝗺𝗼𝗻𝗶𝘁𝗼𝗿𝗶𝗻𝗴 𝗮𝗻𝗱 𝗰𝗮𝗽𝗮𝗰𝗶𝘁𝘆 𝘁𝗵𝗿𝗲𝘀𝗵𝗼𝗹𝗱𝘀 for:

• API saturation,

• request latency,

• scheduler queue depth,

• and etcd utilization (via CloudWatch/Prometheus metrics).

3. 𝗢𝗽𝗲𝗿𝗮𝘁𝗶𝗼𝗻𝗮𝗹 𝗿𝗲𝘀𝗽𝗼𝗻𝘀𝗶𝗯𝗶𝗹𝗶𝘁𝘆

• You gain control, but also responsibility.

• 𝗖𝗮𝗽𝗮𝗰𝗶𝘁𝘆 𝗽𝗹𝗮𝗻𝗻𝗶𝗻𝗴 𝗮𝗻𝗱 𝗿𝗲𝘃𝗶𝗲𝘄𝗶𝗻𝗴 control-plane 𝗺𝗲𝘁𝗿𝗶𝗰𝘀 𝘀𝗵𝗼𝘂𝗹𝗱 become part of your 𝗿𝗲𝗴𝘂𝗹𝗮𝗿 𝗽𝗹𝗮𝘁𝗳𝗼𝗿𝗺 𝗼𝗽𝗲𝗿𝗮𝘁𝗶𝗼𝗻𝘀 𝗿𝗶𝘁𝘂𝗮𝗹𝘀.
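
One way to turn those capacity thresholds into a routine check is sketched below. It compares observed values against the tier limits quoted in this post and flags anything above a chosen fraction. Everything here is an assumption for illustration: the `TierLimits` class, the `capacity_warnings` helper and the 80% threshold are hypothetical, and how you collect the inputs (for example from the API server's `apiserver_current_inflight_requests` metric, via Prometheus or CloudWatch) is left open. Request latency and scheduler queue depth have no documented tier limit, so they would need thresholds of your own.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TierLimits:
    max_api_concurrency: int
    pod_scheduling_rate: int   # pods per second
    etcd_size_gb: float


# XL limits as quoted in this post (assumption: verify against AWS docs).
XL = TierLimits(max_api_concurrency=1_700, pod_scheduling_rate=167, etcd_size_gb=16)


def capacity_warnings(limits: TierLimits,
                      inflight_requests: float,
                      pods_scheduled_per_sec: float,
                      etcd_used_gb: float,
                      threshold: float = 0.8) -> List[str]:
    """List every dimension whose usage is at or above `threshold` of the limit."""
    usage = {
        "API concurrency": inflight_requests / limits.max_api_concurrency,
        "pod scheduling rate": pods_scheduled_per_sec / limits.pod_scheduling_rate,
        "etcd size": etcd_used_gb / limits.etcd_size_gb,
    }
    return [f"{name} at {ratio:.0%} of tier limit"
            for name, ratio in usage.items() if ratio >= threshold]


if __name__ == "__main__":
    # Hypothetical observed peaks for one cluster.
    for warning in capacity_warnings(XL, inflight_requests=1_530,
                                     pods_scheduled_per_sec=50, etcd_used_gb=4.0):
        print(warning)
```

A non-empty result is the signal to start the conversation about moving up a tier before the limit is actually hit.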

For 𝗺𝗮𝗻𝘆 𝘀𝗺𝗮𝗹𝗹 𝗰𝗹𝘂𝘀𝘁𝗲𝗿𝘀, these trade-offs are 𝗻𝗼𝘁 𝘄𝗼𝗿𝘁𝗵 𝗶𝘁. For 𝗹𝗮𝗿𝗴𝗲 𝗽𝗹𝗮𝘁𝗳𝗼𝗿𝗺𝘀, they’re the 𝗽𝗿𝗶𝗰𝗲 𝗼𝗳 𝗽𝗿𝗲𝗱𝗶𝗰𝘁𝗮𝗯𝗶𝗹𝗶𝘁𝘆.
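
To put the hourly prices quoted above into budget terms, the arithmetic is simple enough to sketch. The helper name is hypothetical, the 730-hour month is an averaging assumption (8,760 hours per year divided by 12), and the prices are the ones cited in this post, so confirm them on the AWS pricing page. The result is the tier surcharge only, on top of the usual EKS cluster fee.

```python
# Hourly tier prices in USD as quoted in this post; verify on the pricing page.
HOURLY_PRICE_USD = {"XL": 1.65, "2XL": 3.40, "4XL": 6.90}
HOURS_PER_MONTH = 730  # assumption: average month (8,760 h / 12)


def monthly_surcharge_usd(tier: str, clusters: int = 1) -> float:
    """Approximate monthly tier surcharge for `clusters` clusters on `tier`."""
    return round(HOURLY_PRICE_USD[tier] * HOURS_PER_MONTH * clusters, 2)


if __name__ == "__main__":
    for tier in HOURLY_PRICE_USD:
        print(f"{tier}: about ${monthly_surcharge_usd(tier):,.2f} per cluster per month")
```

That works out to roughly $1,200 per month for XL and about $5,000 per month for 4XL, per cluster, which is the number to weigh against incident costs and consolidation savings.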

𝗔 𝘀𝗶𝗺𝗽𝗹𝗲 𝗱𝗲𝗰𝗶𝘀𝗶𝗼𝗻 𝗳𝗿𝗮𝗺𝗲𝘄𝗼𝗿𝗸 𝗳𝗼𝗿 𝗘𝗞𝗦 𝗮𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁𝘀

You can treat the decision as a 𝘀𝗲𝗿𝗶𝗲𝘀 𝗼𝗳 𝗾𝘂𝗲𝘀𝘁𝗶𝗼𝗻𝘀:

1. Is 𝗔𝗣𝗜 𝘁𝗵𝗿𝗼𝘂𝗴𝗵𝗽𝘂𝘁 𝗼𝗿 𝘀𝗰𝗵𝗲𝗱𝘂𝗹𝗶𝗻𝗴 already a 𝗽𝗿𝗼𝗯𝗹𝗲𝗺?

• No → Stay on 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱.

• Yes → 𝗠𝗲𝗮𝘀𝘂𝗿𝗲. If you’re consistently hitting limitations, consider 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱.

2. 𝗛𝗼𝘄 𝗺𝗮𝗻𝘆 𝗻𝗼𝗱𝗲𝘀 / 𝗽𝗼𝗱𝘀 are we 𝘁𝗮𝗿𝗴𝗲𝘁𝗶𝗻𝗴 per cluster 𝗼𝘃𝗲𝗿 the 𝗻𝗲𝘅𝘁 12–18 𝗺𝗼𝗻𝘁𝗵𝘀?

• < 100 nodes, a few thousand pods → 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 is usually fine.

• Hundreds of nodes (up to 40,000), 10k+ pods (up to 640,000), or consolidated multi-tenant platforms → 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 becomes compelling.

3. Do we 𝗵𝗮𝘃𝗲 𝗶𝗻𝘁𝗲𝗿𝗻𝗮𝗹 𝗦𝗟𝗔𝘀 that 𝗱𝗲𝗽𝗲𝗻𝗱 on 𝗰𝗼𝗻𝘁𝗿𝗼𝗹-𝗽𝗹𝗮𝗻𝗲 behavior?

• If yes (for example, “𝘥𝘦𝘱𝘭𝘰𝘺𝘮𝘦𝘯𝘵 𝘭𝘢𝘵𝘦𝘯𝘤𝘺”, “𝘢𝘶𝘵𝘰𝘴𝘤𝘢𝘭𝘪𝘯𝘨 𝘳𝘦𝘢𝘤𝘵𝘪𝘰𝘯 𝘵𝘪𝘮𝘦”), 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 helps turn those SLAs into something you can actually stand behind.

4. 𝗖𝗮𝗻 𝘄𝗲 𝗷𝘂𝘀𝘁𝗶𝗳𝘆 𝘁𝗵𝗲 𝗲𝘅𝘁𝗿𝗮 𝘀𝗽𝗲𝗻𝗱 with platform-level benefits?

• Fewer control-plane incidents.

• Higher node/pod density.

• More stable experience for internal teams.

If the answer to most of these is “yes”, 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 Control Plane is worth serious consideration.
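
The four questions can also be written as a tiny checklist function, which is handy for applying the same logic across a fleet of clusters. This is only a sketch of the framework above, not an official sizing tool: the `ClusterProfile` fields are hypothetical names, the 100-node and 10,000-pod cut-offs come from the rough guidance in question 2, and "most" is interpreted as at least three of four.

```python
from dataclasses import dataclass


@dataclass
class ClusterProfile:
    throughput_is_measured_bottleneck: bool  # question 1, after measuring
    target_nodes: int                        # question 2, 12-18 month target
    target_pods: int
    multi_tenant: bool
    has_control_plane_slas: bool             # question 3
    spend_is_justified: bool                 # question 4


def recommend_mode(profile: ClusterProfile) -> str:
    """Return 'Provisioned' when at least three of the four answers are yes."""
    large_scale = (profile.target_nodes >= 100
                   or profile.target_pods >= 10_000
                   or profile.multi_tenant)
    yes_count = sum([
        profile.throughput_is_measured_bottleneck,
        large_scale,
        profile.has_control_plane_slas,
        profile.spend_is_justified,
    ])
    return "Provisioned" if yes_count >= 3 else "Standard"


if __name__ == "__main__":
    shared_platform = ClusterProfile(True, 600, 25_000, True, True, False)
    print(recommend_mode(shared_platform))
```

Treat the output as a prompt for a closer look rather than a verdict; the measurement step in question 1 still has to happen first.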

𝗛𝗼𝘄 𝘁𝗼 𝗽𝗿𝗲𝘀𝗲𝗻𝘁 𝘁𝗵𝗶𝘀 𝘁𝗼 𝘆𝗼𝘂𝗿 𝘀𝘁𝗮𝗸𝗲𝗵𝗼𝗹𝗱𝗲𝗿𝘀

𝗔𝘀 𝗮 𝗞𝘂𝗯𝗲𝗿𝗻𝗲𝘁𝗲𝘀 / 𝗔𝗪𝗦 𝗮𝗿𝗰𝗵𝗶𝘁𝗲𝗰𝘁, your job is not just to understand the feature, but to 𝗲𝘅𝗽𝗹𝗮𝗶𝗻 𝗶𝘁 𝗶𝗻 𝗯𝘂𝘀𝗶𝗻𝗲𝘀𝘀 𝘁𝗲𝗿𝗺𝘀:

• 𝗦𝘁𝗮𝗻𝗱𝗮𝗿𝗱 EKS control planes are like a managed, elastic shared service: cheap, simple, “good enough” most of the time.

• 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 Control Plane is like reserving dedicated capacity for the brain of your platform:

• You pay for it.

• In return, you get stability, predictability and the ability to consolidate more workloads without fear.

That’s usually the 𝗿𝗶𝗴𝗵𝘁 𝗻𝗮𝗿𝗿𝗮𝘁𝗶𝘃𝗲 𝗳𝗼𝗿:

• internal platform boards,

• enterprise architects,

• and security / risk stakeholders who care about predictable behavior.

𝗖𝗹𝗼𝘀𝗶𝗻𝗴 𝘁𝗵𝗼𝘂𝗴𝗵𝘁𝘀

The 𝗣𝗿𝗼𝘃𝗶𝘀𝗶𝗼𝗻𝗲𝗱 Control Plane is 𝗻𝗼𝘁 𝗮 𝗳𝗲𝗮𝘁𝘂𝗿𝗲 that 𝗲𝘃𝗲𝗿𝘆 𝗘𝗞𝗦 𝗰𝘂𝘀𝘁𝗼𝗺𝗲𝗿 𝘄𝗶𝗹𝗹 𝗻𝗲𝗲𝗱. 𝗕𝘂𝘁 if you are treating 𝗘𝗞𝗦 𝗮𝘀 𝗮 𝘀𝘁𝗿𝗮𝘁𝗲𝗴𝗶𝗰 𝗽𝗹𝗮𝘁𝗳𝗼𝗿𝗺 (not just a “cluster for a project”), then your control plane deserves to be treated as a 𝗳𝗶𝗿𝘀𝘁-𝗰𝗹𝗮𝘀𝘀 𝗰𝗮𝗽𝗮𝗰𝗶𝘁𝘆 𝗮𝗻𝗱 𝗦𝗟𝗢 𝗼𝗯𝗷𝗲𝗰𝘁.

This 𝗻𝗲𝘄 𝗺𝗼𝗱𝗲 𝗴𝗶𝘃𝗲𝘀 𝘆𝗼𝘂 exactly that: the 𝗮𝗯𝗶𝗹𝗶𝘁𝘆 to deliberately 𝗱𝗲𝘀𝗶𝗴𝗻, 𝘀𝗶𝘇𝗲 and 𝗷𝘂𝘀𝘁𝗶𝗳𝘆 your 𝗰𝗼𝗻𝘁𝗿𝗼𝗹 𝗽𝗹𝗮𝗻𝗲, instead of simply hoping the “managed” part will always keep up.

𝗘𝘅𝗽𝗹𝗼𝗿𝗲 further with 𝗼𝗳𝗳𝗶𝗰𝗶𝗮𝗹 AWS documentation:

𝗔𝗻𝗻𝗼𝘂𝗻𝗰𝗲𝗺𝗲𝗻𝘁: https://aws.amazon.com/blogs/containers/amazon-eks-introduces-provisioned-control-plane/

𝗣𝗿𝗶𝗰𝗶𝗻𝗴: https://aws.amazon.com/eks/pricing


#EKS #Kubernetes #AWS #DevOps #PlatformEngineering